Schools have been closed in most countries since March and—in many places—they’re likely to stay closed until September. This means kids will have been out of school for almost half a year, with potentially catastrophic consequences for their education and their welfare.

Since the onset of the COVID-19 pandemic, much has been written about potential learning loss, and a number of studies are measuring student engagement in distance learning programs. But until now (as far as we know), no one has tried to measure directly whether kids are gaining or losing learning during school closures. With an existing learning crisis and acute educational inequality, it’s vital to rapidly generate data on which children are most at risk of falling irreparably behind and dropping out of school; which children are likely to be most disadvantaged by upcoming high-stakes exams; and which distance learning programs are most effective.

Learning assessments are usually administered face-to-face, so it’s a challenge to figure out how to do them remotely. Fortunately, a number of research teams are rising to the challenge. We know of at least 7 teams in 10 countries that are planning or have started phone assessments. If you’re part of a team engaged in similar efforts, please get in touch with Sharmili Satkunam ([email protected]) so your study can be added to this list.

Using lessons from early movers in Botswana, together with the extensive literature on face-to-face oral assessments, we conclude that yes, it is possible to measure learning by phone. We’ve published some preliminary principles and discussion in a new working paper. Below is a quick summary: five tips that we hope are helpful for those embarking on their own efforts.

1. Keep children safe and put them at ease. Many surveys ask adults about children but very few gather data directly from children. Consent procedures and enumerator training modules need to adapt to make sure that children are not harmed—for example, supervisors could monitor a sample of calls to make sure enumerators are interacting appropriately with children and youth. It’s also important to establish rapport with adult phone owners and the children who are being assessed. Respondents—both adults and youth—are often nervous in this time of global and local crisis.

2. Check the validity of the assessment. Ideally, phone-based assessments would be validated against face-to-face tests, for example by comparing results with tests that used similar tools shortly before schools closed. In Botswana, the correlation between simple “problems-of-the-day” (which are relatively easily adapted to a phone-based assessment) and more comprehensive assessments in March 2020 was 0.69.

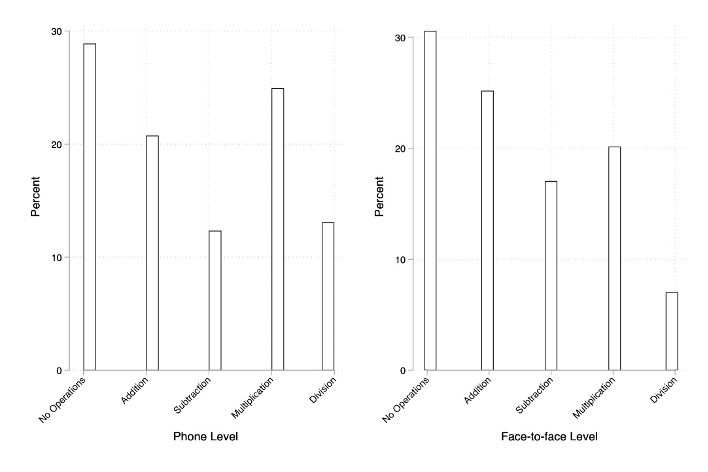

3. Be aware that assessments test more than the skill you intend to test. Oral assessments combine the target skill (e.g., subtraction) with language skills (i.e., the child’s comprehension of the question). In the absence of the visual cues available in face-to-face testing, phone assessments are even more of a test of these language skills. Accounting for this is important, especially in settings where different respondents speak different languages and may comprehend the language of instruction orally either better or worse than they read it. The speed of response is also informative. Tracking whether the child immediately answers the question correctly or answers it correctly only after first making a mistake provides one indicator of content mastery.

4. Think innovatively about how to adapt assessments for phone calls. Oral assessments often have visual stimuli or text for children to read, which may be difficult to adapt to testing by phone. But there are ways around this. Phone-based assessments can incorporate text: even where the most basic phones are used, enumerators could send text messages and ask children to read them aloud. In our pilot in Botswana, for instance, we sent a word problem in mathematics by text and asked the child to read it aloud (assessing literacy) and then solve it (assessing numeracy).

5. Keep it short. The Early Grade Reading Assessment typically takes 15 minutes per child, and our pilot in Botswana is taking 15–20 minutes. Any longer is tough for children, especially those living in noisy, crowded environments. And short can be beautiful: in Botswana, high-frequency micro-assessments have proven to be cheap and informative. Performance in a simple “problem of the day” has a strong relationship with performance in a more extensive oral assessment after 15 days (Figure 1). These were face-to-face assessments, but they demonstrate that simple tests that are possible to administer by phone could indicate levels of learning loss or gain and provide quick and useful information for policymakers and school systems attempting to mitigate the adverse health and educational effects of school closures.

Figure 1. The relationship between student answers on “problem of the day” on the last day of class and average learning levels for the whole class after 15 days.
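The validity check in tip 2 comes down to correlating scores on a short, phone-adaptable item with scores on a fuller assessment for the same children. Below is a minimal sketch of that calculation, not the method used in the Botswana pilot. The function name `pearson_r` and the toy scores are hypothetical and purely illustrative.

```python
from math import sqrt
from typing import Sequence


def pearson_r(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation between two equally long lists of scores."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two score lists of equal length (at least 2)")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of cross-products and sums of squares around the means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when scores do not vary")
    return cov / sqrt(var_x * var_y)


if __name__ == "__main__":
    # Hypothetical toy data, NOT results from any study:
    # 1 = solved the short "problem of the day", 0 = did not.
    short_item = [1, 0, 1, 1, 0, 1]
    # Made-up scores on a longer assessment for the same six children.
    full_assessment = [8, 3, 7, 9, 4, 6]
    print(round(pearson_r(short_item, full_assessment), 2))
```

A high correlation on a validation sample suggests the short item carries much of the same information as the longer test. It does not by itself show that the phone version measures the same thing as the face-to-face version.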

See the paper for more detail.
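The mastery indicator described in tip 3 (correct immediately versus correct only after a mistake) could be recorded per question along the following lines. This is a sketch under assumptions: the category names and the `classify_attempts` helper are hypothetical, and an actual survey instrument may code responses differently.

```python
from enum import Enum
from typing import List


class Outcome(Enum):
    CORRECT_FIRST_TRY = "correct_first_try"
    CORRECT_AFTER_ERROR = "correct_after_error"
    INCORRECT = "incorrect"


def classify_attempts(attempts: List[bool]) -> Outcome:
    """Classify one question from the child's ordered attempts.

    Each entry is True if that attempt was correct. An empty list
    (no answer given) is treated as incorrect.
    """
    if attempts and attempts[0]:
        return Outcome.CORRECT_FIRST_TRY
    if any(attempts):
        return Outcome.CORRECT_AFTER_ERROR
    return Outcome.INCORRECT


if __name__ == "__main__":
    # Hypothetical call log: the enumerator notes each attempt in order.
    print(classify_attempts([True]).value)
    print(classify_attempts([False, True]).value)
    print(classify_attempts([False, False]).value)
```

Keeping the three outcomes separate, rather than collapsing them into right or wrong, preserves the distinction the tip describes between immediate and eventual correct answers.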

This is a young literature and many teams will learn and share a lot over the coming months. We hope to help compile and share those messages. Hopefully these initial principles from our own pilot tests and the oral testing literature can help teams start off on the right foot. Since these efforts are new, it’s important to note that—while they might help identify which students are most disadvantaged by the COVID-19 crisis—phone assessments should not be used for high-stakes decisions around the future of individual students. But, in the longer term, if we can develop consistently reliable methods and tools to measure learning by phone, they have the potential to disrupt the way we measure learning, by enabling both high-frequency diagnostics and more cost-effective ways to assess learning outcomes.

DISCLAIMER & PERMISSIONS

CGD's publications reflect the views of the authors, drawing on prior research and experience in their areas of expertise. CGD is a nonpartisan, independent organization and does not take institutional positions. You may use and disseminate CGD's publications under these conditions.