Recommended

Blog Post

Four Investments Funders Can Make to Raise the Bar on AI Evaluation

In April 2025, several of this blog’s authors —along with other colleagues—published “An AI Evaluation Framework for the Development Sector.” The piece provided a standardized approach to evaluating the thousands of generative AI tutors, health assistants, and farmer coaches that have emerged. It aimed to address a core challenge: “evaluation” means different things across disciplines, with technologists focusing on model performance and safety, and economists on whether AI improves development outcomes such as literacy, crop yields, or income. In practice, organizations must assess four levels of evaluation in low- and middle-income countries (LMICs):

(1) whether the AI system performs as intended,

(2) whether the product engages and retains users,

(3) whether it positively impacts users’ thoughts and behaviors, and

(4) whether it improves a development outcome.

The four stages emerged from observing nonprofits that were building AI-powered products as part of the Agency Fund’s AI for Global Development Accelerator. While grounding the framework in real-world use cases was essential, we questioned what it might miss beyond the accelerator’s cohort and whether it would hold up to academic scrutiny across disciplines. More broadly, we worried that without a credibly validated set of evaluation techniques, implementers and funders would have mismatched understandings of what constituted a high quality evaluation, and ultimately produce studies that were not comparable. To address this, CGD convened a working group of 30 experts in December 2025—spanning computer science, psychology, gender, economics, and implementation from the Global North and South. The goal of the convening was threefold:

- Align on a common vocabulary for what constitutes evaluations of generative AI applications

- Produce a playbook that provides guidance on how to execute evaluations of generative AI powered interventions

- Establish a basic standard of evaluations organizations should meet

Here’s a summary of what happened over the two-day convening, the big takeaways, and what’s happening next.

Attendees spanned disciplines such as computer science, psychology, gender, economics, and evaluation.

What the experts did

- Reviewed a draft playbook as pre-work: Prior to the convening, experts redlined a draft of the playbook on how to conduct evaluations of AI products, adding corrections, nuance, and examples of both real-world applications and frontier thinking.

- Debated the four evaluation levels: Experts both discussed whether the four levels were comprehensive as a set, and what should constitute each individual level. They were also asked to sort the proposed evaluation techniques by feasibility and importance.

- Debated cross-cutting themes across levels: These sessions focused on how issues such as safety and gender manifest across the different evaluation levels, and how evaluation approaches should account for them.

Key topics experts highlighted during the convening

- Minimum viable evaluations (MVEs): Experts agreed that defining a minimum threshold of data collection and analysis, for each level of evaluation, is a useful exercise. Many development organizations and governments lack the resources to pursue frontier evaluation techniques, and MVEs would help set a basic standard. While specific metrics will vary based on a product use case and context, there are some generalizable routines and measures that have been standardized by industry. For example, there are established practices for measuring user retention and safety that all organizations should meet. Establishing minimum standards could accelerate learning and enable funders and governments to set expectations when procuring AI products.

- Effects on subgroups: Even if AI products deliver benefits to the average user, they can have uneven and even harmful effects on subgroups such as women and vulnerable populations. Evaluations must account for this heterogeneity across all four levels of evaluation, and experts recommended integrating metrics that track the most vulnerable product’s user’s needs. Early on, such risks are poorly understood and not always fully mitigable. Yet in the fast-moving generative AI space, a purely precautionary stance risks stifling innovation. The playbook will attempt to balance this by recommending adaptive product development and evaluation approaches that permit experimentation while requiring stringent safeguards once threats or negative effects have been exposed.

- Theory of change: Evaluating generative AI interventions across multiple levels requires a wide range of metrics, but evaluators need a way to see how these measures fit together. Evaluation specialists can develop tunnel vision and become expert at tracking their specific metric, while missing the larger picture. A well-established theory of change provides this structure, mapping the pathway from model performance to product engagement, intermediate proxies, and ultimately outcomes. The theory of change provides the conceptual glue that ties the evaluation levels together and is particularly important for AI interventions where specialists in many disciplines are working together.

- Formative research and an understanding of context: While the playbook focuses on how to conduct evaluations of a generative AI powered product like a chatbot tutor, these products operate within important contexts and systems. For example, the way an education ministry rolls out the product might affect user perception and attitudes towards the product. While the playbook will not focus on techniques to conduct formative research, experts pointed out the need to more explicitly highlight its importance before and during product development.

Working group co-chair Temina Madon presenting on how to evaluate user engagement

What’s next

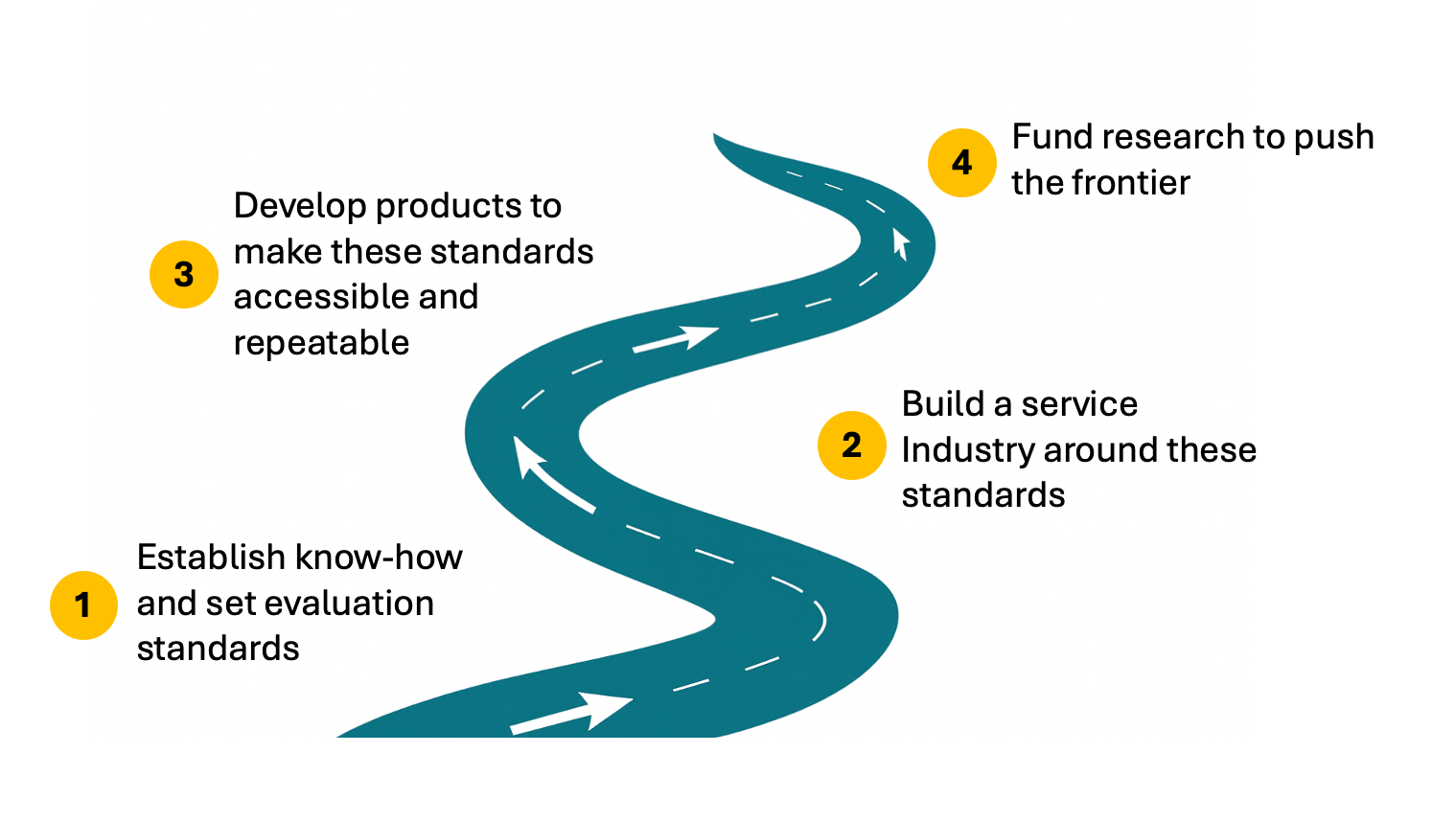

- Engage with funders and governments: The London convening aligned academics and technical implementers around an initial, multi-party-validated version of the evaluation playbook. The next step is to engage funders—many of whom are experts themselves—whose input is critical. Broad adoption will also require funders and governments to work with vendors and grantees to clarify evaluation needs and set expectations, a process this framework is designed to support.

- Publish a living playbook in early Q2: After incorporating further input from experts and funders following the London convening, we plan to publish a “living” playbook as a public resource, updated continuously as generative AI evolves. IDInsight will engage implementers to ensure it is pragmatic and easy to navigate, while CGD will establish a steering committee—initially with members from The Agency Fund, IDInsight, and CGD—to solicit feedback, add new material, and curate updates to the playbook.

- Use and refine across accelerators: One of the ways to pressure test the content and value of the playbook is to support its deployment across accelerators. Technical standards and guidance don’t matter if they are not adopted and refined. Please reach out if you would like to use the playbook in your accelerator.

A playbook defining how to evaluate generative AI products is only a first step. Techniques specific to the needs of sectors like education and health will need to be developed. Guidance on agent evaluations for the development sector will also need to be developed, and we will need to identify a suitable form factor and repository for disseminating the results of AI evaluations. Additionally, building a strong ecosystem for high-quality, comparable evaluations will require much more—including funding universities and research organizations to build AI evaluation capacity and working with funders to align incentives and expectations. Stay tuned for more.

Topics

DISCLAIMER & PERMISSIONS

CGD's publications reflect the views of the authors, drawing on prior research and experience in their areas of expertise. CGD is a nonpartisan, independent organization and does not take institutional positions. You may use and disseminate CGD's publications under these conditions.

Thumbnail image by: Authors