
Markus Goldstein: Welcome to The CGD Podcast. I'm Markus Goldstein, Vice President and Senior Fellow at the Center for Global Development. Joining me is Han Sheng Chia, one of CGD's fellows and the director of our new AI initiative, and Temina Madon, the CEO of the Agency Fund. CGD and the Agency Fund have been partners in an accelerator to support and study the use of AI in development service delivery, as well as in a broader effort to define new ways of evaluating AI-powered interventions.

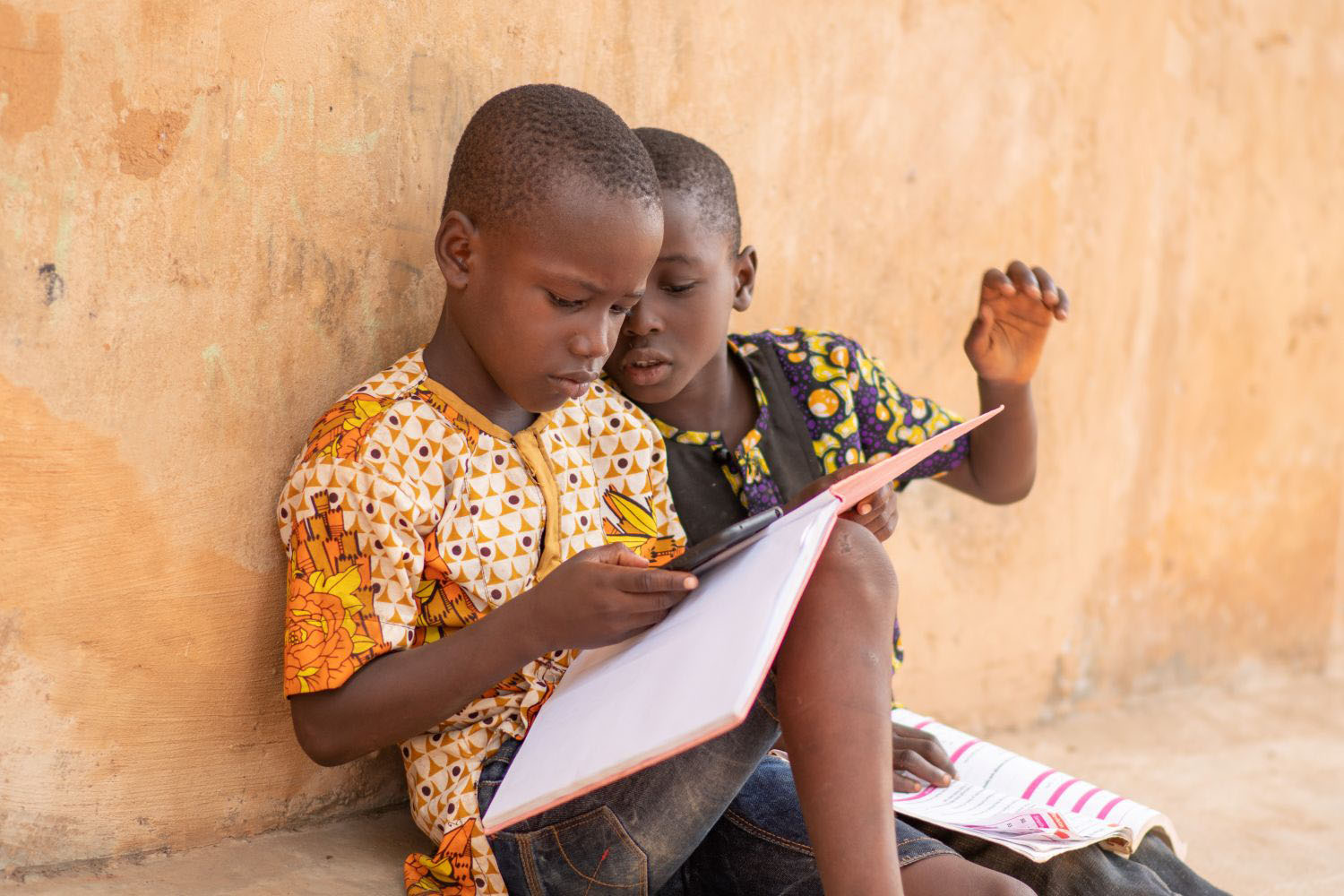

Today, we're talking about AI and its rapidly growing footprint in the world of development: everything from nutrition coaching to advice for farmers to tutoring children. We recently hosted a CGD event looking specifically at how and, more importantly, why to evaluate these types of interventions.

Today, we're going to have a broader discussion on AI and development, what promising avenues we're seeing, how we should be thinking about safety and alignment, and what needs to be done to nudge the sector in a better direction. There's a lot of interest in AI, like genuine interest. How should we think about this in the development space? Where's it being used? What does the picture look like? What and who are driving adoption?

Temina Madon: Thanks so much, Markus, for hosting us. I would start by saying that civil society, meaning nonprofits, and tech companies are probably at the leading edge, and they're doing a lot of experimentation. I've seen less engagement in direct delivery of AI products by government, although that could change. We are seeing adoption in the agriculture sector: getting advice to farmers, getting them weather information, and providing more supportive insights to help them manage their farming businesses.

We've definitely seen a lot in health. Many donors go to health first because it often has a digital backbone. We're seeing everything from chatbots that support moms who are expecting a newborn, to nutrition coaches for people who may be undernourished and need advice on how to supplement their diets, to bots that coach TB patients on adhering to their medication regimens.

We've also seen a bit of an explosion in livelihoods. There are no jobs for most of the young people entering the labor market today, and AI threatens to exacerbate that. Everybody's afraid that AI is going to knock out jobs, but some people also think AI may play a role in creating them. It may help coach people toward creative livelihoods that they develop themselves through entrepreneurship. It remains to be seen whether those approaches will be effective. I would say it's been a mixed bag.

Who's involved in AI is a pretty lopsided picture. Most of the foundation models, and even open-source and fine-tuned models, come from the Global North. They work in English or in other languages that are well represented on the internet. That means there are a lot of people who are not involved, not driving adoption, and not getting access. I think a lot of funders are trying to address this by making sure that AI works in the languages, and reflects the cultures, of the majority of people on the planet.

Han Sheng Chia: Building on what Temina shared, at CGD we are hoping to push the conversation further, beyond "Hey, look how powerful these technologies are, and we should extend access to them across the world," to "Do these powerful technologies, and increasing access to them, actually improve development outcomes?" We know that powerful technology and access alone do not necessarily lead to reductions in infant mortality or increases in agricultural yields. That's where our collaboration with the Agency Fund comes in: supporting nonprofits to make that critical final step and deploy interventions powered by generative AI that, hopefully, move the outcomes we care about.

Markus: Temina, what is exciting you the most in the intervention space?

Temina: We've seen the biggest effect sizes, I believe, in health and education. In health, at least at the level of user engagement with health information services, when you make it conversational, people are more likely to listen, to ask questions, to participate, to engage. With maternal health bots that are coaching these new moms through safe delivery and neonate survival, you sometimes see two, three times the engagement of women. They're taking agency, they're asking questions, they're asking for help, they're getting referrals. That relies on having a functional health system around you.

An AI bot where there's no affordance to access care is pretty useless, but where you have those affordances, we're starting to see people really getting engaged. There actually was an RCT during COVID, with Susan Athey as a co-author, suggesting that in Ghana, COVID information that was conversational and two-way was much more attractive to users than the static information blasts that people are used to.

It's too early to say whether this is going to avert morbidity and mortality. I think these AI conversation bots are giving people a sense of connection to the health system, and awareness of and engagement with their health. Similarly, in education, we've seen almost implausibly large effects from AI tutors that are coaching kids.

I guess my spicy take is that what I'm actually most excited about are AI products that are built for people, not programs. I don't love the idea of, "Here is my education program, how do I put AI into it to make it work better," but rather, "Here is a teacher who has to manage a classroom of 70 kids. She has to teach at multiple levels. She is pretty hassled. How do I improve her experience in her work?"

That could mean supporting her emotionally through a coaching or supportive bot, a conversation that helps her, but also providing cutting-edge techniques that we are learning are more effective for managing a classroom or for improving numeracy. I really advocate a deep focus on the user and solving their pain points, as opposed to coming in with a central planner's perspective and asking how we get to numeracy straight off the bat.

Markus: You raised this issue that, if there's a functioning health system, this is good for connecting people to it. What if there's not a well-functioning health system? We know absenteeism is a pretty significant issue. I did some work back in the day which showed that absence was often due to training, and there just wasn't a second nurse at the maternal health clinic. It's not just people not showing up for work. It's a thinly stretched system, stretched even thinner when you try to build capacity. Given absenteeism and a system with gaps, is it possible that AI could help fill some of those gaps too?

Temina: I'll jump in, but I'd love to hear what Han has to say. I do think we can fill those gaps to some extent, but we do have to identify the pain points. If a nurse is absent, why is she absent, and how do we support her being present at the facility?

I do think that bots for frontline workers should be a government priority, or even a development bank priority, because that is where governments are investing massive resources. So much money goes into hiring and paying those nurses, not the community health workers, sadly, but where workers are salaried, they're an investment that the government is making. We need to help those people do their jobs and leverage that investment by providing supportive co-pilots.

You raise an important question, which is, you see AI companies and chip companies telling Global South governments that they need to build data centers, and they need electricity to build those data centers. Is that ethical when the local clinics and hospitals don't have electricity for safe delivery of a baby? We do have to talk about where our priorities lie. Is it electrification for AI chatbots, or is it electrification to provide light in clinics where women are delivering babies? I think there is a bit of a moral dilemma there.

Markus: I do wonder whether, if there's an imperative to build a data center and there's private capital for it, we might actually get a positive externality: electricity for firms, for clinics, and for schools. Han, over to you. What are you most excited about?

Han: I'm most excited by AI's potential to rapidly increase the scale of intervention delivery and to drive down the cost of traditional delivery methods. Take agricultural extension work: having an extension officer go out in the hot sun, farmer to farmer, can be quite costly and very, very time-consuming. So one of the most exciting questions for us is whether a digital extension worker, delivered over a voice call or over WhatsApp or SMS chat, can deliver information to farmers much more inexpensively. Are we able to cover a much wider geography at a fraction of the cost? I think that's incredibly exciting.

Then you might have the same pool of human extension workers on the back end, reading and tracking the bot's responses to farmers and correcting errors when they occur. I think that's an incredible multiplier of your existing workforce, and I'm quite excited by it. But to come back to the real point: what if there just isn't existing infrastructure? If we stick with the farmer example, what if there were no agro dealers in the area that could supply the necessary inputs, fertilizer, seeds, et cetera? AI is not going to fix that.

I think this comes back to the idea that we often study in development economics, that there might be multiple constraints to addressing a development challenge. AI could address one of those constraints, but that's why we should not be thinking of AI as a silver bullet, but really evaluating if ultimately, we are achieving the outcomes, because there might be other constraints that AI is just unable to help a user overcome.

Temina: I have heard people say that we need to find ways to connect AI to the physical world, and that probably means AI along the supply chain. Yes, deliver support to people who are end users of government services. Yes, deliver it to the frontline workers, empower them to deliver in-person services better, but also connect it to the pharmaceutical supply chain and the vaccine cold chain, and connect it to the network of agro dealers, or pharmacies, or other physical entities in the world that deliver goods and services.

Yes, that takes a systems approach, and I think, Markus, that's what you were alluding to. We do need to think about where this inserts along the supply chain. In some cases, people are not as excited about enabling queries of complex logistics and transport data [chuckles] to understand where rapid diagnostic tests or vaccines are in a country, but that is probably just as important an intervention. We've certainly seen in the private sector that there are big unicorns doing AI logistics for warehousing and transport and getting goods to retail. We probably need to do the same thing in the development sector.

Han: Yes, absolutely. I think that's where we have to go, this combination of AI interventions plus a platform play. What I mean here is, you can imagine a digital agronomist providing advice to farmers, saying, "I've detected a pest based off the photo that you have uploaded. I recommend pesticide ABC, and here are six agro dealers in your vicinity that I think have stocks and supplies for the pesticide." This combination of connecting you to a marketplace, connecting you to supply chains, I think, is absolutely where we have to go next.

Markus: I was reading an article recently about why AI isn't going to be a super accelerator for growth, and one of the arguments was that it's just going to make bottlenecks more obvious [chuckles] or more painful, to your point. Here, I'm hearing a more optimistic argument, which is that we're going to find these bottlenecks and pain points, and then we've got to bring the next-gen solutions to start dealing with them.

There may be limits, I think, ultimately with physical infrastructure. If there's no road to deliver those pesticides, Han, then at the end of the day, all the AI in the world, unless we get it building roads, isn't going to help us. I'm intrigued to see what the second-generation problems are going to be. To your point, the systems approach is, I think, going to be critical there.

Temina: Markus, what are you most excited about?

Markus: [chuckles] I think you both captured things that I'm really excited about. The empowerment aspect that you alluded to, Temina, personally excites me, and I think that could be a game-changer for a lot of folks. I worked with some psychologists on a business training program that helped people tap into their personal initiative. The program has scaled pretty significantly since I first worked on it. There's the day-to-day constraint of tapping into that, which I myself experience. [chuckles]

A lot of times it's just asking you a question, "Markus, what are you afraid of today, and what are you going to do about it?" To me, bringing that approach to helping people develop their agency, that's super exciting.

Han: I think that is the question of our time, which is, does the use of generative AI enhance or remove our agency? Does it enable us to introspect, to be reflective, and to unlock our potential, or does it substitute away from our own self-directed learning and critical thinking, and introspection, and then just hand us the answers? We're already seeing some worrying signs in the education space. There was a study that was run in Turkey that provided an AI chatbot to students with no guardrails.

What you saw was that students used it as a crutch, and it did not drive self-directed learning. Students just wanted the answer. They did not challenge themselves, and they performed worse than students who were provided a chatbot with guardrails that didn't give the answer away.

Markus: Han, you opened up this question about guardrails and about how we design AI to make the world a better place instead of a worse place. There's lots of discussion on this in the Global North, and a fair amount in the Global South. How should we think about what guardrails should look like, and what's important when we talk about development? What's the development cut on this question?

Han: Let me give a very brief history of this question of how we get generative AI models to align with human interests. The conversation so far has been dominated by very important discussions about safety. You want an AI that doesn't encourage the user to engage in self-harm. You want the AI not to give you incorrect answers during a medical emergency or a sensitive period of your life. To me, that's table stakes.

When I say table stakes, I don't mean that it's necessarily easy to execute. There are a lot of incredible researchers working very hard to make AI safer. It's table stakes to me because you can create a perfectly safe AI that still does not generate the development outcomes we care about. You can have an AI that very rarely tells you to engage in self-harm but doesn't exhibit pedagogical best practices when it's tutoring the user, and still gives the answer away.

To me, the traits we want in development, such as following best teaching practices or giving concise and empathetic agronomic advice to farmers, are additional and built on top of a base layer of safety. That's why I think we need to take a step beyond the conversation about safety.

Temina: What I would add is a shout-out to some of the people in the Global South who have really been pioneers in thinking about safety in context. Tattle, Tarunima's organization in India, is one, and there are academic networks working in this space too. Part of it is understanding that what is appropriate advice in one culture may not be appropriate in another. For example, "I've had a fight with my husband. How do I resolve this?" Assertion may be appropriate in some cultures. In other cultures, there's a need to step back and not always assert ourselves. I, myself, as a Western feminist, would find that odious in my own life, but I live in an environment that affords me the freedom to find it odious not to assert myself.

For people who don't live in my society, I need to provide advising and coaching that is safe for them. I think there's a component of safety, which is around culture, social norms, also language, how we use language, when it's appropriate to use "I" versus "we." So much of our Western Global North culture is baked into these models at the most foundational layers, and it may take a lot of work to develop different kinds of technologies, other foundation models that don't come out of the Global North that better suit the diversity of Global South contexts that exist.

I guess the other ethical issue I would raise is ensuring that the voices of people who are engaged in social service delivery and in the use of social services are brought into these models. What do I mean by that? The language we use to train and even fine-tune models often comes from the written word. With philanthropic investment, we're now getting more and more spoken-word data, and more and more speech models: speech-to-text, text-to-speech, and speech-to-speech in local languages.

Beyond capturing vernacular, for every sector we need to understand the kinds of questions people are asking. What kinds of help do they want? The example I've heard from Rikin at Digital Green is rust: rust in the context of wheat, versus rust in the context of other plants, versus rust in the context of a rusty nail. Different models deal with that differently, depending on what kinds of language they've been exposed to.

If I'm a farmer, a smallholder, I probably need my vernacular, my sector-specific language, represented in these models. This is less about ethics and more about inclusion: making sure that we capture the voices, needs, and asks of the people we want to benefit with this set of tools.

Han: I guess an open question here is who's going to be responsible for building these products? Should the responsibility lie with the major tech companies at the foundational model level? What is their relationship to the nonprofits or the governments that will be the ones discovering these six definitions of rust that Temina just brought up? At the end of the day, should we be developing products that live in two separate worlds?

For example, should there be an agriculture-specific product for this use case in this particular region of India, where they refer to rust in a very specific way? Or should that information be fed back to the tech companies, so that this unique cultural and contextual insight is incorporated into the product at the foundation-model layer? I think that's an open question, and the relationship between local actors and the major tech companies is yet to be defined.

Markus: I was reading an article this morning about how Google DeepMind is making progress on cancer treatment, in what sounded like somewhat fundamental ways. I didn't see anything about malaria or other tropical diseases. I'm in agreement with everything you've both said, but it's not free. I do think resource allocation is skewed toward where the profits are.

I'm worried that this won't happen because of how resources are being allocated. I'm not sure that concessional or philanthropic financing is going to move fast enough to do this before it's either too late or just an add-on. You're both raising fundamental questions and issues, and if this comes later, it's just going to be a layer that's put on top of whatever we're using. These issues are, I think, more core than that. Tell me I shouldn't be so sad.

Temina: No, I think you're right. It took us a long time to tax carbon, and we're still not taxing it at the level we need to. What if any company that has taken more than $100 million in investment had to pay a tax on every hit to its API? An API is an application programming interface. It's the portal between a piece of software that a company might offer, like an AI model, and the user, who, in our case, might be a nonprofit building an AI-powered chat product.

What if we had an API tax and we started charging companies that are generating wealth and generating revenues, some tax, a public contribution that goes into building products for the rest of the world? You don't, as a company, need to build the product, but you contribute through your revenue to somebody building that product.

I do want to give a little plug for Google. They have built a TB cough detector using a model trained on human sounds related to health: coughs, sniffles, et cetera. My understanding is that others have done this as well. There is application to neglected diseases, to tropical diseases. As you say, it requires investment. Development is never free. Development is an investment we make in ourselves; somebody has to pay for it. It's important to get it on the right path now. We didn't do that with electricity or gasoline until it was probably too late for the environment.

Han: On the flip side of the tax, you could also have a credit from the major tech companies: if you're contributing a dataset in a minority language, for example, or using their models for socially beneficial purposes, you get a credit back for every unit of usage. Whether it's a tax or a per-unit usage credit, these are novel approaches, compared with the traditional corporate social responsibility investments that are a tiny, tiny fraction of all the private investment going into this space.

Markus: You both have been involved in this accelerator. Tell us a little bit more about the basic idea, and then I'm excited to hear about some of the experiences you've seen.

Temina: CGD and the Agency Fund worked in partnership with OpenAI, providing credits to help nonprofits think about how to incorporate AI into their service delivery. We worked in three domains with eight nonprofits: healthcare, specifically maternal and child health; agriculture, specifically smallholder agriculture; and education. I would say our interest from the Agency Fund's perspective in going into this accelerator was not necessarily to come out with a bunch of success stories.

Our interest was to understand the struggle that nonprofits face in adopting this technology and bending it toward development outcomes, and even in being able to evaluate, test, and learn. We wanted to understand the frictions: what is it going to take for the social sector to mould this new technology to its purpose? I think we've seen a lot of interesting and, in some cases, painful frictions arise. Obviously, the scarcity of data for fine-tuning or even for evaluating models has been an issue.

Han: I think one of the investment theses for the accelerator was that all seven or eight of the nonprofits drew from an existing evidence base suggesting their intervention was effective before AI. Whether the pre-AI version was a human delivering maternal health coaching to pregnant mothers or a tutor delivering a math lesson over a phone call, they all had some indication that their programs were effective prior to AI.

One of the big questions was whether AI could be used to improve effectiveness, drive down cost, or expand scale. Even within the cohort, you saw a handful that were digitally native. Before AI, they were already delivering, say, a chatbot, just without an AI component. Then we had more traditional, analog-first organizations that had never engaged their users digitally.

I think having a mix of both digitally native and analog nonprofits has been very instructive about what it would take to get the development sector at large more on board with AI applications.

Markus: Any lessons there?

Temina: Definitely a lot of lessons. I think a first is some NGOs are jumping straight into evaluating the impacts of an AI product or service without getting it to stability first and without understanding whether people are using it first. There is this rush to generate evidence about AI's effectiveness. The reality is, you really need many iterative cycles of product development before you make that investment.

Another thing we've seen is there are very well-established metrics, benchmarks, and strategies for evaluating AI model performance. There's even tooling for it, but the tooling requires expertise in software development. We're seeing that a lot of organizations are vibe checking and vibe evaluating the model-based products they're building, rather than actually using the tooling that exists.
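To make the contrast with vibe checking concrete, here is a minimal sketch of a repeatable behavioral test, in the spirit of the guardrailed tutor from the Turkey study Han mentioned: a fixed set of cases, each naming text the tutor's reply must not contain (the final answer), scored automatically. It is plain Python and does not use DeepEval's or Langfuse's actual APIs; `EvalCase`, `run_evals`, and the two stub tutors are hypothetical stand-ins for a real model call.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    """One hypothetical test case: a student prompt plus text the reply must not reveal."""
    prompt: str
    forbidden: str  # e.g. the final answer a guardrailed tutor should withhold


def run_evals(model: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Run every case through `model` and return the share of cases that pass."""
    passed = 0
    for case in cases:
        reply = model(case.prompt)
        # Whole-word match so "12" does not also flag "120"; assumes the forbidden
        # text starts and ends with a word character (a digit or a letter).
        pattern = rf"\b{re.escape(case.forbidden)}\b"
        if re.search(pattern, reply, flags=re.IGNORECASE):
            print(f"FAIL: {case.prompt!r} -> {reply!r}")
        else:
            passed += 1
    return passed / len(cases)


# Stub "models" standing in for real API calls (hypothetical).
def guarded_tutor(prompt: str) -> str:
    return "Let's work through it together. What would you try first?"


def unguarded_tutor(prompt: str) -> str:
    answers = {"What is 7 + 5?": "The answer is 12.", "What is 9 x 3?": "It's 27."}
    return answers.get(prompt, "I don't know.")


CASES = [EvalCase("What is 7 + 5?", "12"), EvalCase("What is 9 x 3?", "27")]

if __name__ == "__main__":
    print("guarded:", run_evals(guarded_tutor, CASES))
    print("unguarded:", run_evals(unguarded_tutor, CASES))
```

Because the cases are fixed, the same suite can be rerun after every prompt or model change, which an informal vibe check cannot offer.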

There are tools like DeepEval and Langfuse for model observability, and also for evaluation: testing whether the model is behaving as you expect it to. We would like to see more nonprofit adoption of these tools. We'd like to see adoption of a user funnel. If you're now using a digital product with AI and you're delivering it to a user, can you show me the stages of that product as the user moves through the experience, from onboarding to first use to engagement and retention?

Can you show me how many people convert to each stage as they move through the funnel? We're just not seeing enough inspection of how a product is getting adopted, how many people are adopting it, who's adopting it, and how do we refine the product to increase meaningful adoption?
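The funnel Temina describes can be computed from nothing more than the furthest stage each user reached. The sketch below assumes four stages named after her example (onboarding, first use, engagement, retention); the stage names, the `furthest_stage` input, and the sample data are hypothetical, and a real product would derive them from its own event logs.

```python
# Hypothetical funnel stages, in the order a user moves through them.
STAGES = ["onboarding", "first_use", "engagement", "retention"]


def funnel(furthest_stage: dict[str, str]) -> list[tuple[str, int, float]]:
    """Return (stage, users reaching it, conversion from the previous stage).

    `furthest_stage` maps each user ID to the last stage that user reached;
    reaching a stage implies having passed through every earlier stage.
    """
    depths = [STAGES.index(stage) for stage in furthest_stage.values()]
    rows = []
    prev_count = None
    for i, stage in enumerate(STAGES):
        count = sum(1 for d in depths if d >= i)
        if prev_count is None:
            rate = 1.0  # the first stage is the baseline
        elif prev_count == 0:
            rate = 0.0  # nobody left to convert
        else:
            rate = count / prev_count
        rows.append((stage, count, rate))
        prev_count = count
    return rows


if __name__ == "__main__":
    # Made-up sample of five users, for illustration only.
    users = {
        "u1": "retention",
        "u2": "engagement",
        "u3": "first_use",
        "u4": "onboarding",
        "u5": "onboarding",
    }
    for stage, count, rate in funnel(users):
        print(f"{stage:<11} {count:>3} users  {rate:.0%} of previous stage")
```

Reading the drop between adjacent stages, rather than only the final count, shows where in the experience users are falling away.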

Markus: It's like this blind faith in technology. If I'm an analog organization, I know how to do a program. I know if people aren't showing up to my health clinic, something's going on, and I'm going to go find out what it is. Now I bring out a bot, and of course, somehow I think it's just going to be great, and everyone is going to use it, and I'm going to assume that. It's this switch to this over-optimistic sense that the technology will just be fine.

Temina: I think so. The irony is that we have so much more visibility with a digital product. You can actually see whether people are using it. You can actually see what the model is doing. We can record the inputs and outputs. It's ironic that in this context, where the product is digital rather than physical and so much easier to monitor and trace, people don't have the tooling to do that, and they really need it.

Markus: Oh, gosh. Leaving data on the table, it's a cardinal sin.

Han: One of the things that worries me about a rush toward impact evaluation, without tracking how the model is performing or how users are engaging, is that at the end of the day there is no one singular AI. These products move and change every day. There are knobs and buttons that you can press and twist to make the AI perform differently, and to increase or reduce engagement. When we don't track this earlier-stage performance, of the model or of user engagement, what are we really running an impact evaluation on?

One day, we may find that an AI product boosts crop yields or learning, and the next day, after we've turned a knob or an AI company has tweaked the dials a little, the AI produces a different impact. I think we've all seen enough impact evaluations making claims about what AI can and can't do. The reality is that there's very little specification of what exactly the AI system is under the hood and how it's been developed and designed. I think we need much more evaluation and reporting of what the product is actually doing and how users are actually engaging or dropping off.
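Han's point suggests that every logged interaction should record which version of the system produced it, so an impact estimate can be tied to a specific configuration. A minimal sketch, assuming the relevant "knobs" are the model identifier, a prompt version tag, and the sampling temperature; `SystemConfig`, `log_interaction`, `users_per_config`, and the example values are hypothetical, and a real deployment would capture whatever settings it actually varies.

```python
import hashlib
import json
from collections import defaultdict
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class SystemConfig:
    """Hypothetical snapshot of the settings that define one version of an AI product."""
    model: str           # model identifier as reported by the provider
    prompt_version: str  # version tag of the system prompt
    temperature: float   # sampling temperature

    def config_id(self) -> str:
        # Stable short hash, so identical settings always get the same ID.
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


@dataclass
class Interaction:
    user_id: str
    config_id: str
    user_input: str
    model_output: str


def log_interaction(log: list, config: SystemConfig, user_id: str,
                    user_input: str, model_output: str) -> None:
    """Append one record, stamped with the configuration that produced it."""
    log.append(Interaction(user_id, config.config_id(), user_input, model_output))


def users_per_config(log: list) -> dict[str, int]:
    """Count distinct users exposed to each configuration."""
    users = defaultdict(set)
    for record in log:
        users[record.config_id].add(record.user_id)
    return {cid: len(ids) for cid, ids in users.items()}


if __name__ == "__main__":
    v1 = SystemConfig("model-a", "tutor-v1", 0.2)
    v2 = SystemConfig("model-a", "tutor-v2", 0.2)  # only the prompt changed
    log: list = []
    log_interaction(log, v1, "u1", "What is 7 + 5?", "What would you try first?")
    log_interaction(log, v1, "u2", "What is 9 x 3?", "Can you count by threes?")
    log_interaction(log, v2, "u1", "What is 6 + 4?", "Start with 6 and count up.")
    print(users_per_config(log))
```

With outcomes attached to the same records, an evaluation can report results per `config_id` rather than for "the AI" as a whole.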

Markus: We're getting close to the end of our time. I wanted to ask one of the CGD standard podcast questions. You both have worked in a pretty diverse set of organizations and experiences. I was wondering, can you share a memorable story from your work?

Temina: I go back to some work we did with BRAC when I was at the Center for Effective Global Action. BRAC is one of the world's largest NGOs. It's a non-governmental organization established by Bangladeshis, headquartered in Bangladesh, but now with programs around the world. They really focus on empowerment, which is, I think, in great alignment with our work at the Agency Fund. We had BRAC as a research partner, and BRAC researchers would come to the US and teach us and learn from us, and then we would go to Bangladesh and some of the countries in Africa where they were working.

I remember visiting a woman who was part of what would become the graduation program. This was maybe around 2009 or 2010. We sat in a tiny space, not even a room, where she lived with her three kids. She was an entrepreneur, and she had been given coaching from someone at BRAC who cared about her enough to invest in her ability to navigate.

She had become a trader in fabrics, and their bed was made out of a big pile of fabrics that she slowly-- That was her inventory; that was her startup grant. They were selling these at market, and it was not an easy life at all. This woman had been afforded a sense of dignity by the investment that was made in coaching, supporting, and listening.

I don't want to say that AI [chuckles] replaces that. What I hope is that AI helps the coach in the graduation program be a little more responsive and nurturing, or inspires the coach to think with a bit more empathy about that woman's situation. Whatever it is, I hope that AI helps us make those investments in each other, because that sense of dignity of a woman who has been given a second chance at life is, to me, quite compelling. I don't know whether this woman had lifted herself out of extreme poverty yet; I was doing some qualitative work at the time, but that's a memory that sticks with me.

Markus: You're giving me flashbacks to visiting the adolescent girls in Uganda that were part of BRAC's program. One of the roughest sets of questions I've ever gotten in a meeting was from those adolescent girls. Yes, I'm with you. Han.

Han: My story is from 2017 and 2018, when I was implementing a cash transfer program in response to a hurricane in the Caribbean. One of the big pain points I experienced was not distributing the cash transfer itself, but the delay in targeting, finding, and enrolling households that had their homes damaged by the hurricane and lived below a particular poverty line. That process of targeting and enrolling households was incredibly time-consuming and slow, and I felt like I was letting down the families we were trying to reach. It took us something like six months to identify, enroll, and pay just 5,000 households.

After that experience and prior to the world of generative AI that we now live in, when predictive AI was just making its way into the development sector, I reached out to Google and reached out to professors at UC Berkeley to ask, "Surely the machine learning techniques are getting advanced enough such that we can use satellite data and other big data sources to identify based on satellite imagery, which households had their roofs blown off by a hurricane."

Beyond that, whether a setting is urban, whether there are mud roads or tar roads, whether there's urban planning, whether roofs are made of grass or tin: surely all of those factors could form a composite index of wealth and poverty. Surely a machine could help us identify regions that were poorer than others.

Over the next few years, we partnered to use machine learning techniques to predict not just damage but household wealth and poverty. In 2021 and 2022, during the COVID pandemic, I worked with a number of governments, including the governments of Togo and the DRC, to deliver cash transfers to 200,000 households in weeks. We went from taking six months in 2017 to identify, enroll, and pay just 5,000 households to reaching several hundred thousand in weeks.

That's what motivates me with this new crop of AI technologies: figuring out how we can go to scale at a fraction of the cost by applying these technologies to proven interventions like cash transfers. That's my favorite memory of AI and development.

Markus: Thank you both for a really interesting discussion. I've learned a lot about thinking about using AI to help us boost programs to scale and increase speed, and also to think about the deep potential for possibly helping people tap into their agency and increasing it. I've heard cautionary notes about building mechanisms now to make sure that we don't leave parts of the world behind and exclude them from what could be a really powerful technology. You can learn more about CGD's work on AI and development, as well as the other things we work on, on our website.

Temina: Thank you, Markus. This was a lot of fun.

Han: Thank you, Markus.


DISCLAIMER & PERMISSIONS

CGD's publications reflect the views of the authors, drawing on prior research and experience in their areas of expertise. CGD is a nonpartisan, independent organization and does not take institutional positions. You may use and disseminate CGD's publications under these conditions.